How do you measure software quality?

Welcome to the first post in Qualetics’ Software Intelligence Series! These short posts will be shared every Monday, Wednesday, and Friday and will focus on ways to derive and communicate insights that help you proactively manage your software quality and customer experience. A common question for any organization that relies on software to manage at least part of its customer experience is “How is our software doing?” Here’s how we answer that question at Qualetics.

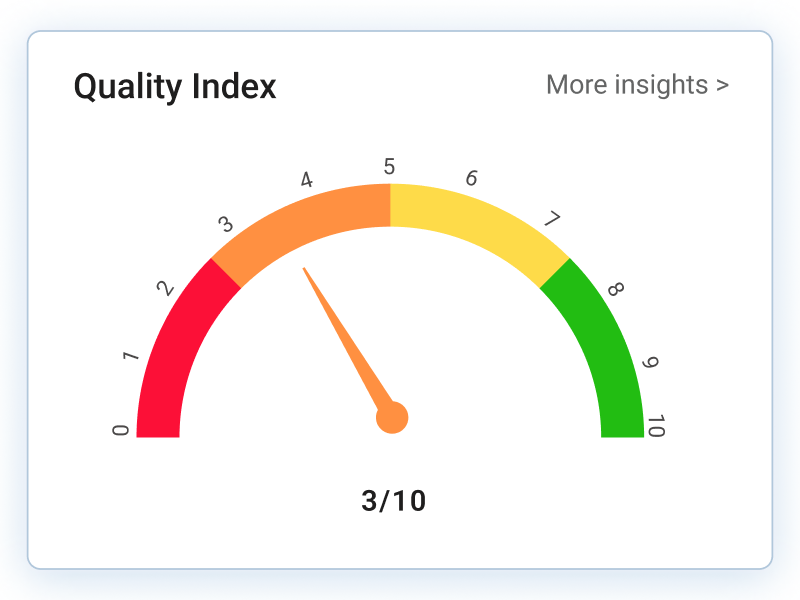

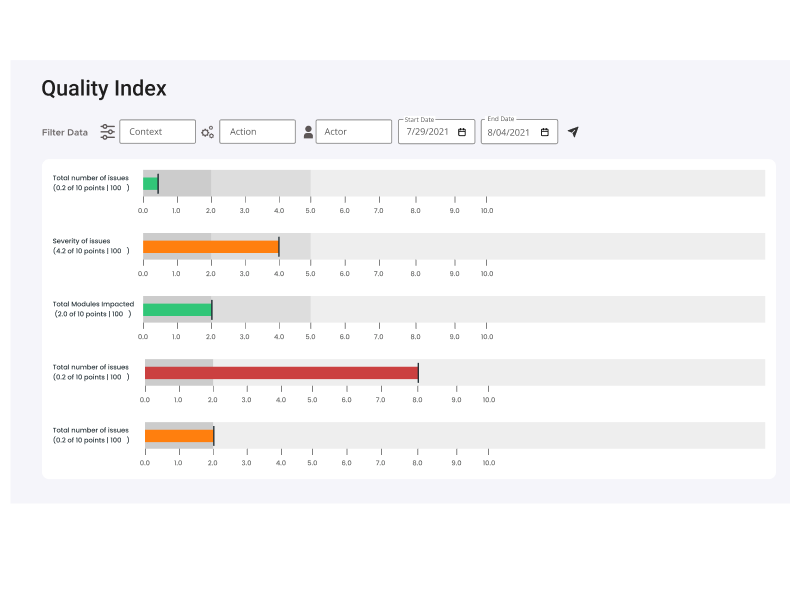

Ideally, you could answer that question with an objective, fact-based, easy-to-understand measure. The “Quality Index” gauge above is a visualization we’ve adopted to show, on a scale of -5 to +5, how our customers’ software (SaaS applications, mobile apps, websites) is doing. The score is derived from the following five factors, listed here in descending order of weight. (Anticipating your question, “Where do these come from?”: these are actual examples taken from implementations of Qualetics’ AI-based software quality plug-ins. Our description lays out the logical framework, so you can derive similar decision support either by extracting these measures yourself or by implementing our plug-ins.)

Error Types: Of the errors that are occurring in your software experience, how many are considered critical, how many are major, and how many are minor? The score above indicates a software experience that is heavily weighted toward minor errors.
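To make this factor concrete, here is a minimal Python sketch of how a severity mix might be turned into a score on the same -5 to +5 scale. The penalty values and the linear mapping are assumptions for illustration only, not the values Qualetics uses.

```python
# Hypothetical penalty per severity level (illustrative values only):
# a critical error counts fully against the score, a minor one only slightly.
SEVERITY_PENALTY = {"critical": 1.0, "major": 0.5, "minor": 0.1}


def error_type_score(severities: list[str]) -> float:
    """Map the severity mix of observed errors onto the -5..+5 scale.

    Returns +5 when there are no errors and -5 when every error is critical.
    """
    if not severities:
        return 5.0
    # Average penalty per error: 0.1 if all minor, 1.0 if all critical.
    avg_penalty = sum(SEVERITY_PENALTY[s] for s in severities) / len(severities)
    # Linear mapping (an assumption): penalty 0 -> +5, penalty 1 -> -5.
    return 5.0 - 10.0 * avg_penalty


# Usage: a mix of 90 minor and 10 major errors.
print(round(error_type_score(["minor"] * 90 + ["major"] * 10), 2))
```

With 90 minor and 10 major errors, the average penalty is 0.14 and the sketch scores the mix at 3.6, the kind of high score you would expect from an experience weighted toward minor errors.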

Users Impacted: Of all the users of your software, how many are actually impacted by the errors that are occurring? In this case, it is a relatively small share of the overall user population.
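A minimal Python sketch of this factor, assuming you have a user ID attached to each error event and a count of active users for the same period. The linear mapping onto -5 to +5 is an assumption for illustration.

```python
def users_impacted_score(error_user_ids: list[str], total_users: int) -> float:
    """Map the share of users who hit at least one error onto -5..+5.

    error_user_ids holds one entry per error event, so the same user may
    appear many times; each user is counted only once.
    """
    if total_users <= 0:
        raise ValueError("total_users must be positive")
    impacted = len(set(error_user_ids))
    # Cap at 1.0 in case error events include users outside the active count.
    share = min(impacted / total_users, 1.0)
    # Linear mapping (an assumption): nobody impacted -> +5, everyone -> -5.
    return 5.0 - 10.0 * share


# Usage: 3 error events from 2 distinct users, out of 100 active users.
print(round(users_impacted_score(["u1", "u1", "u2"], 100), 2))
```

Counting distinct users rather than error events keeps one unlucky user who hits the same error repeatedly from looking like a widespread problem.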

Error Frequency: This indicates how frequently the errors are occurring. Again, this software is performing quite well, with a relatively low error frequency.

Context Impact: “Context” is the portion of the user experience affected by errors, and how important that portion is to the software’s purpose. For instance, if this were an eCommerce website, this score might indicate that the errors are affecting the checkout process. So although the errors are minor and infrequent, they may be occurring at a critical point in the user experience.

Number of Issues: This is a measure of the total number of unique, identified errors. If analysis showed that the errors occurring were mostly the same underlying issue, so that fixing only a few things would correct most or all of them, this score would be closer to +5. The more numerous the unique issues, the closer to -5 the score would be.
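Counting “unique” issues usually means grouping individual error events by some kind of fingerprint. The Python sketch below assumes a simple, hypothetical fingerprint (error type plus the message with numbers masked) and maps how spread out the events are across issues onto -5 to +5: one issue behind every event scores +5, and every event being a different issue scores -5. Real grouping rules, for example ones based on stack traces, would likely be more involved.

```python
import re


def fingerprint(error_type: str, message: str) -> str:
    """Hypothetical grouping key: error type plus the message with digit runs
    masked, so "Timeout after 3000 ms" and "Timeout after 2500 ms" match."""
    normalized = re.sub(r"\d+", "#", message)
    return f"{error_type}:{normalized}"


def number_of_issues_score(events: list[tuple[str, str]]) -> float:
    """Score how concentrated error events are across unique issues.

    events is a list of (error_type, message) pairs, one per occurrence.
    +5: every event traces back to one issue (zero or one event also scores +5).
    -5: every event is a different issue.
    """
    if len(events) <= 1:
        return 5.0
    unique_issues = len({fingerprint(t, m) for t, m in events})
    # 0.0 when all events share one issue, 1.0 when all are distinct.
    spread = (unique_issues - 1) / (len(events) - 1)
    return 5.0 - 10.0 * spread


events = [
    ("TimeoutError", "Timeout after 3000 ms"),
    ("TimeoutError", "Timeout after 2500 ms"),
    ("TypeError", "cart is undefined"),
    ("TimeoutError", "Timeout after 4100 ms"),
]
print(round(number_of_issues_score(events), 2))
```

Here the four events collapse into two issues, so the sketch prints 1.67: fixing two things would clear every error in the sample.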

We find this provides a well-balanced framework for assessing software quality, and our analytics and AI algorithms ensure that the data generating these insights is objective.
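The way the five factors roll up into a single Quality Index can be sketched as a weighted average. In this minimal Python sketch the factor names and weight values are hypothetical; the only things carried over from the description are that each factor is scored on the -5 to +5 scale and that the weights descend in the order the factors are listed. Because the weights sum to 1.0, the index stays on the same -5 to +5 scale as its inputs.

```python
# Hypothetical weights: assumed to descend in the order the factors are
# listed and to sum to 1.0, so the index stays on the -5..+5 scale.
WEIGHTS = {
    "error_types": 0.30,
    "users_impacted": 0.25,
    "error_frequency": 0.20,
    "context_impact": 0.15,
    "number_of_issues": 0.10,
}


def quality_index(factor_scores: dict[str, float]) -> float:
    """Combine five factor scores (each on -5..+5) into one Quality Index."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        # Clamp each input so one out-of-range factor can't skew the index.
        score = max(-5.0, min(5.0, factor_scores[name]))
        total += weight * score
    return round(total, 1)


print(quality_index({
    "error_types": 3.6,
    "users_impacted": 4.0,
    "error_frequency": 3.5,
    "context_impact": -1.0,
    "number_of_issues": 2.0,
}))
```

With positive scores on every factor except Context Impact, this example prints 2.8: the negative context score pulls the index down, but only in proportion to its share of the weight.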

Our next two posts in the series, on Wednesday and Friday, will dive deeper into these five quality metrics. Let us know what you think in the comments, and please like or share this post if you find it interesting!